---

annotations_creators:

- crowdsourced

- expert-generated

- found

- machine-generated

language_creators:

- crowdsourced

- expert-generated

- found

- machine-generated

languages:

- ar

- bg

- de

- el

- en

- es

- fr

- hi

- it

- nl

- pl

- pt

- ru

- sw

- th

- tr

- ur

- vi

- zh

licenses:

- cc-by-nc-4.0

- cc-by-sa-4.0

- other-Licence Universal Dependencies v2.5

- unknown

multilinguality:

- multilingual

- translation

size_categories:

- 100K<n<1M

- 10K<n<100K

source_datasets:

- extended|conll2003

- extended|squad

- extended|xnli

- original

task_categories:

- question-answering

- summarization

- text-classification

- text2text-generation

- token-classification

task_ids:

- acceptability-classification

- extractive-qa

- named-entity-recognition

- natural-language-inference

- news-articles-headline-generation

- open-domain-qa

- parsing

- text-classification-other-paraphrase-identification

- text2text-generation-other-question-answering

- topic-classification

paperswithcode_id: null

pretty_name: XGLUE

configs:

- mlqa

- nc

- ner

- ntg

- paws-x

- pos

- qadsm

- qam

- qg

- wpr

- xnli

---

# Dataset Card for XGLUE

## Table of Contents

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:** [XGLUE homepage](https://microsoft.github.io/XGLUE/)

- **Paper:** [XGLUE: A New Benchmark Dataset for Cross-lingual Pre-training, Understanding and Generation](https://arxiv.org/abs/2004.01401)

### Dataset Summary

XGLUE is a new benchmark dataset to evaluate the performance of cross-lingual pre-trained models with respect to cross-lingual natural language understanding and generation.

The training data for each task is in English, while the validation and test data are available in multiple languages.

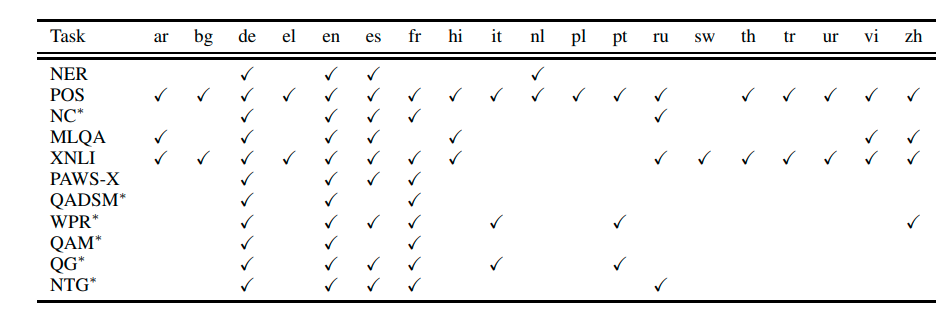

The following table shows which languages are present as validation and test data for each config.

Therefore, for each config, a cross-lingual pre-trained model should be fine-tuned on the English training data, and evaluated on for all languages.
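The evaluation protocol described above can be sketched as a small loop over the per-language test splits. This is a minimal illustration, not the official evaluation script: the helper name `per_language_accuracy`, the toy data, and the use of plain accuracy as the metric are all assumptions made for the example. Only the split naming (`train`, `validation.<lang>`, `test.<lang>`) follows this card.

```python
def per_language_accuracy(predict, splits):
    """Return {language: accuracy} over every 'test.<lang>' split.

    `splits` maps split names such as 'test.de' to lists of (text, label)
    pairs; `predict` is any callable mapping a text to a predicted label.
    """
    scores = {}
    for name, examples in splits.items():
        kind, _, lang = name.partition(".")
        if kind != "test" or not examples:
            continue  # skip 'train', 'validation.*' and empty splits
        correct = sum(1 for text, label in examples if predict(text) == label)
        scores[lang] = correct / len(examples)
    return scores


# Toy stand-in data (hypothetical, not taken from XGLUE).
toy_splits = {
    "train": [("good", 1), ("bad", 0)],
    "test.en": [("good", 1), ("bad", 0)],
    "test.de": [("gut", 1), ("schlecht", 0)],
}


def toy_predict(text):
    """A trivial 'model' that only recognises one English word."""
    return 1 if text == "good" else 0


print(per_language_accuracy(toy_predict, toy_splits))
```

In practice `predict` would wrap a model fine-tuned on the `train` split, and the per-language numbers show how well that English-only training transfers.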

### Supported Tasks and Leaderboards

The XGLUE leaderboard can be found on the [homepage](https://microsoft.github.io/XGLUE/) and consists of an XGLUE-Understanding Score (the average of the tasks `ner`, `pos`, `mlqa`, `nc`, `xnli`, `paws-x`, `qadsm`, `wpr`, `qam`) and an XGLUE-Generation Score (the average of the tasks `qg`, `ntg`).
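The two aggregate scores can be sketched as plain averages over the task lists given above. This card does not say which metric each task uses or how a per-task score is aggregated across languages, so the sketch takes one number per task as input. The function name `xglue_scores` and the placeholder values are hypothetical.

```python
from statistics import mean

# Task groupings as listed in this card.
UNDERSTANDING_TASKS = ["ner", "pos", "mlqa", "nc", "xnli", "paws-x", "qadsm", "wpr", "qam"]
GENERATION_TASKS = ["qg", "ntg"]


def xglue_scores(task_scores):
    """Average per-task scores into (understanding, generation)."""
    understanding = mean(task_scores[t] for t in UNDERSTANDING_TASKS)
    generation = mean(task_scores[t] for t in GENERATION_TASKS)
    return understanding, generation


# Placeholder per-task scores, made up purely for illustration.
example_scores = {task: 80.0 for task in UNDERSTANDING_TASKS}
example_scores.update({"qg": 10.0, "ntg": 12.0})
print(xglue_scores(example_scores))
```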

### Languages

For all tasks (configurations), the "train" split is in English (`en`).

For each task, the "validation" and "test" splits are present in these languages:

- ner: `en`, `de`, `es`, `nl`

- pos: `en`, `de`, `es`, `nl`, `bg`, `el`, `fr`, `pl`, `tr`, `vi`, `zh`, `ur`, `hi`, `it`, `ar`, `ru`, `th`

- mlqa: `en`, `de`, `ar`, `es`, `hi`, `vi`, `zh`

- nc: `en`, `de`, `es`, `fr`, `ru`

- xnli: `en`, `ar`, `bg`, `de`, `el`, `es`, `fr`, `hi`, `ru`, `sw`, `th`, `tr`, `ur`, `vi`, `zh`

- paws-x: `en`, `de`, `es`, `fr`

- qadsm: `en`, `de`, `fr`

- wpr: `en`, `de`, `es`, `fr`, `it`, `pt`, `zh`

- qam: `en`, `de`, `fr`

- qg: `en`, `de`, `es`, `fr`, `it`, `pt`

- ntg: `en`, `de`, `es`, `fr`, `ru`
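The language lists above translate directly into split names of the form `validation.<lang>` and `test.<lang>`, which is the naming used in the Data Instances and Data Splits sections of this card. The following sketch encodes the lists as a dictionary. The names `EVAL_LANGUAGES` and `eval_split_names` are introduced here for illustration only.

```python
# Evaluation languages per config, copied from the list above.
EVAL_LANGUAGES = {
    "ner": ["en", "de", "es", "nl"],
    "pos": ["en", "de", "es", "nl", "bg", "el", "fr", "pl", "tr", "vi",
            "zh", "ur", "hi", "it", "ar", "ru", "th"],
    "mlqa": ["en", "de", "ar", "es", "hi", "vi", "zh"],
    "nc": ["en", "de", "es", "fr", "ru"],
    "xnli": ["en", "ar", "bg", "de", "el", "es", "fr", "hi", "ru", "sw",
             "th", "tr", "ur", "vi", "zh"],
    "paws-x": ["en", "de", "es", "fr"],
    "qadsm": ["en", "de", "fr"],
    "wpr": ["en", "de", "es", "fr", "it", "pt", "zh"],
    "qam": ["en", "de", "fr"],
    "qg": ["en", "de", "es", "fr", "it", "pt"],
    "ntg": ["en", "de", "es", "fr", "ru"],
}


def eval_split_names(config):
    """Return all 'validation.<lang>' and 'test.<lang>' names for a config."""
    langs = EVAL_LANGUAGES[config]
    return [f"{split}.{lang}" for split in ("validation", "test") for lang in langs]


print(eval_split_names("qam"))
```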

## Dataset Structure

### Data Instances

#### ner

An example of 'test.nl' looks as follows.

```

{

"ner": [

"O",

"O",

"O",

"B-LOC",

"O",

"B-LOC",

"O",

"B-LOC",

"O",

"O",

"O",

"O",

"O",

"O",

"O",

"B-PER",

"I-PER",

"O",

"O",

"B-LOC",

"O",

"O"

],

"words": [

"Dat",

"is",

"in",

"Itali\u00eb",

",",

"Spanje",

"of",

"Engeland",

"misschien",

"geen",

"probleem",

",",

"maar",

"volgens",

"'",

"Der",

"Kaiser",

"'",

"in",

"Duitsland",

"wel",

"."

]

}

```
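To make the tag sequence easier to read, the `B-`/`I-`/`O` labels can be grouped into entity spans. The sketch below assumes the tags are strings, as in the example above; a loaded dataset may encode them as integer class ids that have to be mapped back to names first. The helper `bio_to_spans` is hypothetical and not part of any library.

```python
def bio_to_spans(words, tags):
    """Group BIO tags into a list of (entity_type, text) spans."""
    spans, current_type, current_words = [], None, []
    for word, tag in zip(words, tags):
        starts_new = tag.startswith("B-") or (
            tag.startswith("I-") and tag[2:] != current_type
        )
        if starts_new:
            # Close any open span, then open a new one.
            if current_words:
                spans.append((current_type, " ".join(current_words)))
            current_type, current_words = tag[2:], [word]
        elif tag.startswith("I-"):
            current_words.append(word)  # continue the open span
        else:
            # "O" closes any open span.
            if current_words:
                spans.append((current_type, " ".join(current_words)))
            current_type, current_words = None, []
    if current_words:
        spans.append((current_type, " ".join(current_words)))
    return spans


# The tail of the 'test.nl' example above.
words = ["volgens", "'", "Der", "Kaiser", "'", "in", "Duitsland", "wel", "."]
tags = ["O", "O", "B-PER", "I-PER", "O", "O", "B-LOC", "O", "O"]
print(bio_to_spans(words, tags))
```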

#### pos

An example of 'test.fr' looks as follows.

```

{

"pos": [

"PRON",

"VERB",

"SCONJ",

"ADP",

"PRON",

"CCONJ",

"DET",

"NOUN",

"ADP",

"NOUN",

"CCONJ",

"NOUN",

"ADJ",

"PRON",

"PRON",

"AUX",

"ADV",

"VERB",

"PUNCT",

"PRON",

"VERB",

"VERB",

"DET",

"ADJ",

"NOUN",

"ADP",

"DET",

"NOUN",

"PUNCT"

],

"words": [

"Je",

"sens",

"qu'",

"entre",

"\u00e7a",

"et",

"les",

"films",

"de",

"m\u00e9decins",

"et",

"scientifiques",

"fous",

"que",

"nous",

"avons",

"d\u00e9j\u00e0",

"vus",

",",

"nous",

"pourrions",

"emprunter",

"un",

"autre",

"chemin",

"pour",

"l'",

"origine",

"."

]

}

```

#### mlqa

An example of 'test.hi' looks as follows.

```

{

"answers": {

"answer_start": [

378

],

"text": [

"\u0909\u0924\u094d\u0924\u0930 \u092a\u0942\u0930\u094d\u0935"

]

},

"context": "\u0909\u0938\u0940 \"\u090f\u0930\u093f\u092f\u093e XX \" \u0928\u093e\u092e\u0915\u0930\u0923 \u092a\u094d\u0930\u0923\u093e\u0932\u0940 \u0915\u093e \u092a\u094d\u0930\u092f\u094b\u0917 \u0928\u0947\u0935\u093e\u0926\u093e \u092a\u0930\u0940\u0915\u094d\u0937\u0923 \u0938\u094d\u0925\u0932 \u0915\u0947 \u0905\u0928\u094d\u092f \u092d\u093e\u0917\u094b\u0902 \u0915\u0947 \u0932\u093f\u090f \u0915\u093f\u092f\u093e \u0917\u092f\u093e \u0939\u0948\u0964\u092e\u0942\u0932 \u0930\u0942\u092a \u092e\u0947\u0902 6 \u092c\u091f\u0947 10 \u092e\u0940\u0932 \u0915\u093e \u092f\u0939 \u0906\u092f\u0924\u093e\u0915\u093e\u0930 \u0905\u0921\u094d\u0921\u093e \u0905\u092c \u0924\u0925\u093e\u0915\u0925\u093f\u0924 '\u0917\u094d\u0930\u0942\u092e \u092c\u0949\u0915\u094d\u0938 \" \u0915\u093e \u090f\u0915 \u092d\u093e\u0917 \u0939\u0948, \u091c\u094b \u0915\u093f 23 \u092c\u091f\u0947 25.3 \u092e\u0940\u0932 \u0915\u093e \u090f\u0915 \u092a\u094d\u0930\u0924\u093f\u092c\u0902\u0927\u093f\u0924 \u0939\u0935\u093e\u0908 \u0915\u094d\u0937\u0947\u0924\u094d\u0930 \u0939\u0948\u0964 \u092f\u0939 \u0915\u094d\u0937\u0947\u0924\u094d\u0930 NTS \u0915\u0947 \u0906\u0902\u0924\u0930\u093f\u0915 \u0938\u0921\u093c\u0915 \u092a\u094d\u0930\u092c\u0902\u0927\u0928 \u0938\u0947 \u091c\u0941\u0921\u093c\u093e \u0939\u0948, \u091c\u093f\u0938\u0915\u0940 \u092a\u0915\u094d\u0915\u0940 \u0938\u0921\u093c\u0915\u0947\u0902 \u0926\u0915\u094d\u0937\u093f\u0923 \u092e\u0947\u0902 \u092e\u0930\u0915\u0930\u0940 \u0915\u0940 \u0913\u0930 \u0914\u0930 \u092a\u0936\u094d\u091a\u093f\u092e \u092e\u0947\u0902 \u092f\u0941\u0915\u094d\u0915\u093e \u092b\u094d\u0932\u0948\u091f \u0915\u0940 \u0913\u0930 \u091c\u093e\u0924\u0940 \u0939\u0948\u0902\u0964 \u091d\u0940\u0932 \u0938\u0947 \u0909\u0924\u094d\u0924\u0930 \u092a\u0942\u0930\u094d\u0935 \u0915\u0940 \u0913\u0930 \u092c\u0922\u093c\u0924\u0947 \u0939\u0941\u090f \u0935\u094d\u092f\u093e\u092a\u0915 \u0914\u0930 \u0914\u0930 
\u0938\u0941\u0935\u094d\u092f\u0935\u0938\u094d\u0925\u093f\u0924 \u0917\u094d\u0930\u0942\u092e \u091d\u0940\u0932 \u0915\u0940 \u0938\u0921\u093c\u0915\u0947\u0902 \u090f\u0915 \u0926\u0930\u094d\u0930\u0947 \u0915\u0947 \u091c\u0930\u093f\u092f\u0947 \u092a\u0947\u091a\u0940\u0926\u093e \u092a\u0939\u093e\u0921\u093c\u093f\u092f\u094b\u0902 \u0938\u0947 \u0939\u094b\u0915\u0930 \u0917\u0941\u091c\u0930\u0924\u0940 \u0939\u0948\u0902\u0964 \u092a\u0939\u0932\u0947 \u0938\u0921\u093c\u0915\u0947\u0902 \u0917\u094d\u0930\u0942\u092e \u0918\u093e\u091f\u0940",

"question": "\u091d\u0940\u0932 \u0915\u0947 \u0938\u093e\u092a\u0947\u0915\u094d\u0937 \u0917\u094d\u0930\u0942\u092e \u0932\u0947\u0915 \u0930\u094b\u0921 \u0915\u0939\u093e\u0901 \u091c\u093e\u0924\u0940 \u0925\u0940?"

}

```
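The `answers` dictionary pairs each answer string with a start position. The sketch below assumes that `answer_start` is a character offset into `context`, in the style of SQuAD. This card does not state that explicitly, so treat it as an assumption. The toy example and the helper `answer_matches_context` are made up for illustration.

```python
def answer_matches_context(example):
    """Check that every answer text sits at its stated character offset."""
    answers = example["answers"]
    context = example["context"]
    return all(
        context[start:start + len(text)] == text
        for start, text in zip(answers["answer_start"], answers["text"])
    )


# Hypothetical English example with the same structure as the instance above.
toy_context = "Groom Lake Road runs north-east from the lake."
toy_example = {
    "context": toy_context,
    "question": "Where does Groom Lake Road run relative to the lake?",
    "answers": {
        "answer_start": [toy_context.index("north-east")],
        "text": ["north-east"],
    },
}
print(answer_matches_context(toy_example))
```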

#### nc

An example of 'test.es' looks as follows.

```

{

"news_body": "El bizcocho es seguramente el producto m\u00e1s b\u00e1sico y sencillo de toda la reposter\u00eda : consiste en poco m\u00e1s que mezclar unos cuantos ingredientes, meterlos al horno y esperar a que se hagan. Por obra y gracia del impulsor qu\u00edmico, tambi\u00e9n conocido como \"levadura de tipo Royal\", despu\u00e9s de un rato de calorcito esta combinaci\u00f3n de harina, az\u00facar, huevo, grasa -aceite o mantequilla- y l\u00e1cteo se transforma en uno de los productos m\u00e1s deliciosos que existen para desayunar o merendar . Por muy manazas que seas, es m\u00e1s que probable que tu bizcocho casero supere en calidad a cualquier infamia industrial envasada. Para lograr un bizcocho digno de admiraci\u00f3n s\u00f3lo tienes que respetar unas pocas normas que afectan a los ingredientes, proporciones, mezclado, horneado y desmoldado. Todas las tienes resumidas en unos dos minutos el v\u00eddeo de arriba, en el que adem \u00e1s aprender\u00e1s alg\u00fan truquillo para que tu bizcochaco quede m\u00e1s fino, jugoso, esponjoso y amoroso. M\u00e1s en MSN:",

"news_category": "foodanddrink",

"news_title": "Cocina para lerdos: las leyes del bizcocho"

}

```

#### xnli

An example of 'validation.th' looks as follows.

```

{

"hypothesis": "\u0e40\u0e02\u0e32\u0e42\u0e17\u0e23\u0e2b\u0e32\u0e40\u0e40\u0e21\u0e48\u0e02\u0e2d\u0e07\u0e40\u0e02\u0e32\u0e2d\u0e22\u0e48\u0e32\u0e07\u0e23\u0e27\u0e14\u0e40\u0e23\u0e47\u0e27\u0e2b\u0e25\u0e31\u0e07\u0e08\u0e32\u0e01\u0e17\u0e35\u0e48\u0e23\u0e16\u0e42\u0e23\u0e07\u0e40\u0e23\u0e35\u0e22\u0e19\u0e2a\u0e48\u0e07\u0e40\u0e02\u0e32\u0e40\u0e40\u0e25\u0e49\u0e27",

"label": 1,

"premise": "\u0e41\u0e25\u0e30\u0e40\u0e02\u0e32\u0e1e\u0e39\u0e14\u0e27\u0e48\u0e32, \u0e21\u0e48\u0e32\u0e21\u0e4a\u0e32 \u0e1c\u0e21\u0e2d\u0e22\u0e39\u0e48\u0e1a\u0e49\u0e32\u0e19"

}

```

#### paws-x

An example of 'test.es' looks as follows.

```

{

"label": 1,

"sentence1": "La excepci\u00f3n fue entre fines de 2005 y 2009 cuando jug\u00f3 en Suecia con Carlstad United BK, Serbia con FK Borac \u010ca\u010dak y el FC Terek Grozny de Rusia.",

"sentence2": "La excepci\u00f3n se dio entre fines del 2005 y 2009, cuando jug\u00f3 con Suecia en el Carlstad United BK, Serbia con el FK Borac \u010ca\u010dak y el FC Terek Grozny de Rusia."

}

```

#### qadsm

An example of 'train' looks as follows.

```

{

"ad_description": "Your New England Cruise Awaits! Holland America Line Official Site.",

"ad_title": "New England Cruises",

"query": "cruise portland maine",

"relevance_label": 1

}

```

#### wpr

An example of 'test.zh' looks as follows.

```

{

"query": "maxpro\u5b98\u7f51",

"relavance_label": 0,

"web_page_snippet": "\u5728\u7ebf\u8d2d\u4e70\uff0c\u552e\u540e\u670d\u52a1\u3002vivo\u667a\u80fd\u624b\u673a\u5f53\u5b63\u660e\u661f\u673a\u578b\u6709NEX\uff0cvivo X21\uff0cvivo X20\uff0c\uff0cvivo X23\u7b49\uff0c\u5728vivo\u5b98\u7f51\u8d2d\u4e70\u624b\u673a\u53ef\u4ee5\u4eab\u53d712 \u671f\u514d\u606f\u4ed8\u6b3e\u3002 \u54c1\u724c Funtouch OS \u4f53\u9a8c\u5e97 | ...",

"web_page_title": "vivo\u667a\u80fd\u624b\u673a\u5b98\u65b9\u7f51\u7ad9-AI\u975e\u51e1\u6444\u5f71X23"

}

```

#### qam

An example of 'validation.en' looks as follows.

```

{

"answer": "Erikson has stated that after the last novel of the Malazan Book of the Fallen was finished, he and Esslemont would write a comprehensive guide tentatively named The Encyclopaedia Malazica.",

"label": 0,

"question": "main character of malazan book of the fallen"

}

```

#### qg

An example of 'test.de' looks as follows.

```

{

"answer_passage": "Medien bei WhatsApp automatisch speichern. Tippen Sie oben rechts unter WhatsApp auf die drei Punkte oder auf die Men\u00fc-Taste Ihres Smartphones. Dort wechseln Sie in die \"Einstellungen\" und von hier aus weiter zu den \"Chat-Einstellungen\". Unter dem Punkt \"Medien Auto-Download\" k\u00f6nnen Sie festlegen, wann die WhatsApp-Bilder heruntergeladen werden sollen.",

"question": "speichenn von whats app bilder unterbinden"

}

```

#### ntg

An example of 'test.en' looks as follows.

```

{

"news_body": "Check out this vintage Willys Pickup! As they say, the devil is in the details, and it's not every day you see such attention paid to every last area of a restoration like with this 1961 Willys Pickup . Already the Pickup has a unique look that shares some styling with the Jeep, plus some original touches you don't get anywhere else. It's a classy way to show up to any event, all thanks to Hollywood Motors . A burgundy paint job contrasts with white lower panels and the roof. Plenty of tasteful chrome details grace the exterior, including the bumpers, headlight bezels, crossmembers on the grille, hood latches, taillight bezels, exhaust finisher, tailgate hinges, etc. Steel wheels painted white and chrome hubs are a tasteful addition. Beautiful oak side steps and bed strips add a touch of craftsmanship to this ride. This truck is of real showroom quality, thanks to the astoundingly detailed restoration work performed on it, making this Willys Pickup a fierce contender for best of show. Under that beautiful hood is a 225 Buick V6 engine mated to a three-speed manual transmission, so you enjoy an ideal level of control. Four wheel drive is functional, making it that much more utilitarian and downright cool. The tires are new, so you can enjoy a lot of life out of them, while the wheels and hubs are in great condition. Just in case, a fifth wheel with a tire and a side mount are included. Just as important, this Pickup runs smoothly, so you can go cruising or even hit the open road if you're interested in participating in some classic rallies. You might associate Willys with the famous Jeep CJ, but the automaker did produce a fair amount of trucks. The Pickup is quite the unique example, thanks to distinct styling that really turns heads, making it a favorite at quite a few shows. 
Source: Hollywood Motors Check These Rides Out Too: Fear No Trails With These Off-Roaders 1965 Pontiac GTO: American Icon For Sale In Canada Low-Mileage 1955 Chevy 3100 Represents Turn In Pickup Market",

"news_title": "This 1961 Willys Pickup Will Let You Cruise In Style"

}

```

### Data Fields

#### ner

Each data field in `ner` is explained below. The data fields are the same across all splits.

- `words`: a list of words composing the sentence.

- `ner`: a list of entity classes, one for each word.

#### pos

Each data field in `pos` is explained below. The data fields are the same across all splits.

- `words`: a list of words composing the sentence.

- `pos`: a list of part-of-speech classes, one for each word.

#### mlqa

Each data field in `mlqa` is explained below. The data fields are the same across all splits.

- `context`: a string, the context containing the answer.

- `question`: a string, the question to be answered.

- `answers`: a dictionary with the keys `text` (a list of answer strings for `question`) and `answer_start` (a list of the corresponding start positions in `context`).

#### nc

Each data field in `nc` is explained below. The data fields are the same across all splits.

- `news_title`: a string, the title of the news report.

- `news_body`: a string, the actual news report.

- `news_category`: a string, the category of the news report, *e.g.* `foodanddrink`.

#### xnli

Each data field in `xnli` is explained below. The data fields are the same across all splits.

- `premise`: a string, the context/premise, *i.e.* the first sentence for natural language inference.

- `hypothesis`: a string, a sentence whose relation to `premise` is to be classified, *i.e.* the second sentence for natural language inference.

- `label`: a class label (int), the natural language inference relation between `hypothesis` and `premise`. One of 0: entailment, 1: contradiction, 2: neutral.

#### paws-x

Each data field in `paws-x` is explained below. The data fields are the same across all splits.

- `sentence1`: a string, a sentence.

- `sentence2`: a string, a sentence that is either a paraphrase of `sentence1` or not.

- `label`: a class label (int), whether `sentence2` is a paraphrase of `sentence1`. One of 0: different, 1: same.

#### qadsm

Each data field in `qadsm` is explained below. The data fields are the same across all splits.

- `query`: a string, the search query one would insert into a search engine.

- `ad_title`: a string, the title of the advertisement.

- `ad_description`: a string, the content of the advertisement, *i.e.* the main body.

- `relevance_label`: a class label (int), how relevant the advertisement `ad_title` + `ad_description` is to the search query `query`. One of 0: Bad, 1: Good.

#### wpr

Each data field in `wpr` is explained below. The data fields are the same across all splits.

- `query`: a string, the search query one would insert into a search engine.

- `web_page_title`: a string, the title of a web page.

- `web_page_snippet`: a string, the content of a web page, *i.e.* the main body.

- `relavance_label`: a class label (int), how relevant the web page `web_page_title` + `web_page_snippet` is to the search query `query`. One of 0: Bad, 1: Fair, 2: Good, 3: Excellent, 4: Perfect.
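As a small illustration of the fields above, the integer label can be mapped back to its name and the page title and snippet joined into one text. The label order is the one listed in this card, and the field name is spelled `relavance_label` as it appears here. The helper `describe_wpr` and the toy record are hypothetical.

```python
# Label names in the order given in this card (index = class id).
WPR_LABELS = ["Bad", "Fair", "Good", "Excellent", "Perfect"]


def describe_wpr(example):
    """Return (query, page text, label name) for one wpr-style record."""
    page_text = f'{example["web_page_title"]} {example["web_page_snippet"]}'
    return example["query"], page_text, WPR_LABELS[example["relavance_label"]]


# Made-up record with the same keys as the fields described above.
toy_record = {
    "query": "example query",
    "web_page_title": "Example title",
    "web_page_snippet": "Example snippet.",
    "relavance_label": 2,
}
print(describe_wpr(toy_record))
```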

#### qam

Each data field in `qam` is explained below. The data fields are the same across all splits.

- `question`: a string, a question.

- `answer`: a string, a possible answer to `question`.

- `label`: a class label (int), whether the `answer` is relevant to the `question`. One of 0: False, 1: True.

#### qg

Each data field in `qg` is explained below. The data fields are the same across all splits.

- `answer_passage`: a string, a detailed answer to the `question`.

- `question`: a string, a question.

#### ntg

Each data field in `ntg` is explained below. The data fields are the same across all splits.

- `news_body`: a string, the content of a news article.

- `news_title`: a string, the title corresponding to the news article `news_body`.

### Data Splits

#### ner

The following table shows the number of data samples/number of rows for each split in ner.

| |train|validation.en|validation.de|validation.es|validation.nl|test.en|test.de|test.es|test.nl|

|---|----:|------------:|------------:|------------:|------------:|------:|------:|------:|------:|

|ner|14042| 3252| 2874| 1923| 2895| 3454| 3007| 1523| 5202|
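Because evaluation data is spread over one split per language, it can be handy to total the rows per split type. The sketch below does this for the `ner` counts from the table above. The names `NER_SPLIT_SIZES` and `totals_by_split` are introduced here for illustration.

```python
# Row counts for `ner`, copied from the table above.
NER_SPLIT_SIZES = {
    "train": 14042,
    "validation.en": 3252, "validation.de": 2874,
    "validation.es": 1923, "validation.nl": 2895,
    "test.en": 3454, "test.de": 3007, "test.es": 1523, "test.nl": 5202,
}


def totals_by_split(sizes):
    """Sum row counts over languages for each split type."""
    totals = {}
    for name, count in sizes.items():
        kind = name.split(".")[0]  # 'validation.de' -> 'validation'
        totals[kind] = totals.get(kind, 0) + count
    return totals


print(totals_by_split(NER_SPLIT_SIZES))
```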

#### pos

The following table shows the number of data samples/number of rows for each split in pos.

| |train|validation.en|validation.de|validation.es|validation.nl|validation.bg|validation.el|validation.fr|validation.pl|validation.tr|validation.vi|validation.zh|validation.ur|validation.hi|validation.it|validation.ar|validation.ru|validation.th|test.en|test.de|test.es|test.nl|test.bg|test.el|test.fr|test.pl|test.tr|test.vi|test.zh|test.ur|test.hi|test.it|test.ar|test.ru|test.th|

|---|----:|------------:|------------:|------------:|------------:|------------:|------------:|------------:|------------:|------------:|------------:|------------:|------------:|------------:|------------:|------------:|------------:|------------:|------:|------:|------:|------:|------:|------:|------:|------:|------:|------:|------:|------:|------:|------:|------:|------:|------:|

|pos|25376| 2001| 798| 1399| 717| 1114| 402| 1475| 2214| 987| 799| 499| 551| 1658| 563| 908| 578| 497| 2076| 976| 425| 595| 1115| 455| 415| 2214| 982| 799| 499| 534| 1683| 481| 679| 600| 497|

#### mlqa

The following table shows the number of data samples/number of rows for each split in mlqa.

| |train|validation.en|validation.de|validation.ar|validation.es|validation.hi|validation.vi|validation.zh|test.en|test.de|test.ar|test.es|test.hi|test.vi|test.zh|

|----|----:|------------:|------------:|------------:|------------:|------------:|------------:|------------:|------:|------:|------:|------:|------:|------:|------:|

|mlqa|87599| 1148| 512| 517| 500| 507| 511| 504| 11590| 4517| 5335| 5253| 4918| 5495| 5137|

#### nc

The following table shows the number of data samples/number of rows for each split in nc.

| |train |validation.en|validation.de|validation.es|validation.fr|validation.ru|test.en|test.de|test.es|test.fr|test.ru|

|---|-----:|------------:|------------:|------------:|------------:|------------:|------:|------:|------:|------:|------:|

|nc |100000| 10000| 10000| 10000| 10000| 10000| 10000| 10000| 10000| 10000| 10000|

#### xnli

The following table shows the number of data samples/number of rows for each split in xnli.

| |train |validation.en|validation.ar|validation.bg|validation.de|validation.el|validation.es|validation.fr|validation.hi|validation.ru|validation.sw|validation.th|validation.tr|validation.ur|validation.vi|validation.zh|test.en|test.ar|test.bg|test.de|test.el|test.es|test.fr|test.hi|test.ru|test.sw|test.th|test.tr|test.ur|test.vi|test.zh|

|----|-----:|------------:|------------:|------------:|------------:|------------:|------------:|------------:|------------:|------------:|------------:|------------:|------------:|------------:|------------:|------------:|------:|------:|------:|------:|------:|------:|------:|------:|------:|------:|------:|------:|------:|------:|------:|

|xnli|392702| 2490| 2490| 2490| 2490| 2490| 2490| 2490| 2490| 2490| 2490| 2490| 2490| 2490| 2490| 2490| 5010| 5010| 5010| 5010| 5010| 5010| 5010| 5010| 5010| 5010| 5010| 5010| 5010| 5010| 5010|

#### paws-x

The following table shows the number of data samples/number of rows for each split in paws-x.

| |train|validation.en|validation.de|validation.es|validation.fr|test.en|test.de|test.es|test.fr|

|------|----:|------------:|------------:|------------:|------------:|------:|------:|------:|------:|

|paws-x|49401| 2000| 2000| 2000| 2000| 2000| 2000| 2000| 2000|

#### qadsm

The following table shows the number of data samples/number of rows for each split in qadsm.

| |train |validation.en|validation.de|validation.fr|test.en|test.de|test.fr|

|-----|-----:|------------:|------------:|------------:|------:|------:|------:|

|qadsm|100000| 10000| 10000| 10000| 10000| 10000| 10000|

#### wpr

The following table shows the number of data samples/number of rows for each split in wpr.

| |train|validation.en|validation.de|validation.es|validation.fr|validation.it|validation.pt|validation.zh|test.en|test.de|test.es|test.fr|test.it|test.pt|test.zh|

|---|----:|------------:|------------:|------------:|------------:|------------:|------------:|------------:|------:|------:|------:|------:|------:|------:|------:|

|wpr|99997| 10008| 10004| 10004| 10005| 10003| 10001| 10002| 10004| 9997| 10006| 10020| 10001| 10015| 9999|

#### qam

The following table shows the number of data samples/number of rows for each split in qam.

| |train |validation.en|validation.de|validation.fr|test.en|test.de|test.fr|

|---|-----:|------------:|------------:|------------:|------:|------:|------:|

|qam|100000| 10000| 10000| 10000| 10000| 10000| 10000|

#### qg

The following table shows the number of data samples/number of rows for each split in qg.

| |train |validation.en|validation.de|validation.es|validation.fr|validation.it|validation.pt|test.en|test.de|test.es|test.fr|test.it|test.pt|

|---|-----:|------------:|------------:|------------:|------------:|------------:|------------:|------:|------:|------:|------:|------:|------:|

|qg |100000| 10000| 10000| 10000| 10000| 10000| 10000| 10000| 10000| 10000| 10000| 10000| 10000|

#### ntg

The following table shows the number of data samples/number of rows for each split in ntg.

| |train |validation.en|validation.de|validation.es|validation.fr|validation.ru|test.en|test.de|test.es|test.fr|test.ru|

|---|-----:|------------:|------------:|------------:|------------:|------------:|------:|------:|------:|------:|------:|

|ntg|300000| 10000| 10000| 10000| 10000| 10000| 10000| 10000| 10000| 10000| 10000|

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

[More Information Needed]

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

The dataset is maintained mainly by Yaobo Liang, Yeyun Gong, Nan Duan, Ming Gong, Linjun Shou, and Daniel Campos from Microsoft Research.

### Licensing Information

The licensing status of the dataset hinges on the legal status of [XGLUE](https://microsoft.github.io/XGLUE/), which is unclear.

### Citation Information

```

@article{Liang2020XGLUEAN,

title={XGLUE: A New Benchmark Dataset for Cross-lingual Pre-training, Understanding and Generation},

author={Yaobo Liang and Nan Duan and Yeyun Gong and Ning Wu and Fenfei Guo and Weizhen Qi and Ming Gong and Linjun Shou and Daxin Jiang and Guihong Cao and Xiaodong Fan and Ruofei Zhang and Rahul Agrawal and Edward Cui and Sining Wei and Taroon Bharti and Ying Qiao and Jiun-Hung Chen and Winnie Wu and Shuguang Liu and Fan Yang and Daniel Campos and Rangan Majumder and Ming Zhou},

journal={arXiv},

year={2020},

volume={abs/2004.01401}

}

```

### Contributions

Thanks to [@patrickvonplaten](https://github.com/patrickvonplaten) for adding this dataset.
