dev: uploaded model files

- cat_dog_classifier.bin +3 -0

- config.json +8 -0

- model.py +13 -0

- quickdraw_data/cat.npy +3 -0

- quickdraw_data/dog.npy +3 -0

- requirements.txt +6 -0

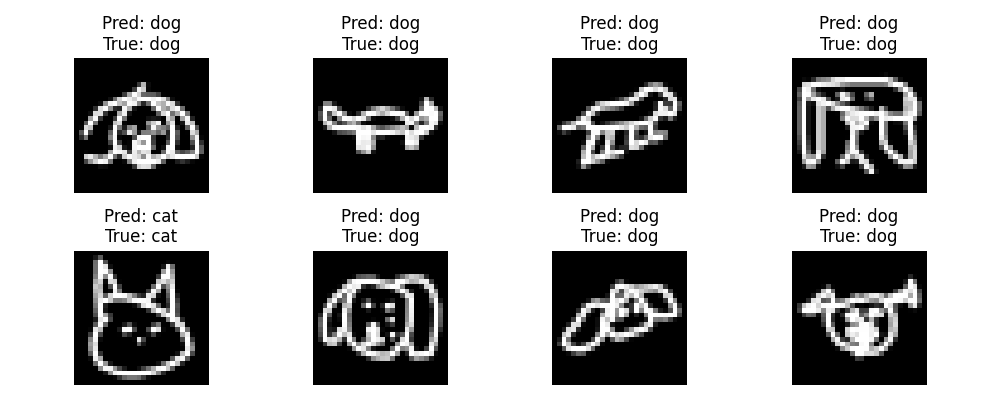

- sample_predictions.png +0 -0

- train_cat_dog_classifier.py +310 -0

cat_dog_classifier.bin

ADDED

@@ -0,0 +1,3 @@

```text
version https://git-lfs.github.com/spec/v1
oid sha256:6be6dd4dbb80eb2824563dd9237b63a582a57482130e0494642e4e06ece39728
size 1685764
```
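The three lines above are a Git LFS pointer, not the weights themselves. The checkpoint (1,685,764 bytes) lives in LFS storage, and `oid` is the SHA-256 digest of the real file's contents. The sketch below checks a downloaded checkpoint against its pointer. It assumes both the pointer text and the file are available locally. `parse_lfs_pointer` and `verify_against_pointer` are hypothetical helpers and are not part of the Git LFS tooling.

```python
import hashlib
import os


def parse_lfs_pointer(text):
    """Parse Git LFS pointer text into a dict of its 'key value' lines."""
    fields = {}
    for line in text.strip().splitlines():
        key, _, value = line.partition(" ")
        fields[key] = value
    return fields


def verify_against_pointer(path, pointer_text):
    """Return True if the file at `path` matches the pointer's size and sha256 oid."""
    fields = parse_lfs_pointer(pointer_text)
    algo, _, expected_hash = fields["oid"].partition(":")
    if algo != "sha256":
        raise ValueError(f"unsupported oid algorithm: {algo}")
    # Cheap check first: the byte size must match exactly
    if os.path.getsize(path) != int(fields["size"]):
        return False
    # Hash the file in 1 MiB chunks so large files are not read into memory at once
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest() == expected_hash


# Pointer text copied from the commit above
POINTER = """version https://git-lfs.github.com/spec/v1
oid sha256:6be6dd4dbb80eb2824563dd9237b63a582a57482130e0494642e4e06ece39728
size 1685764
"""
```

For example, `verify_against_pointer("cat_dog_classifier.bin", POINTER)` returns `True` only if the local file is the real checkpoint. It returns `False` if the file is a truncated download or is still the pointer text itself.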

config.json

ADDED

@@ -0,0 +1,8 @@

```json
{
  "batch_size": 64,
  "num_epochs": 5,
  "learning_rate": 0.001,
  "model_save_path": "cat_dog_classifier.bin",
  "data_path": "quickdraw_data",
  "num_samples": 5000
}
```
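The training script reads `batch_size`, `num_epochs`, `learning_rate`, `num_samples` and `model_save_path` from this file through `load_config()`. It never reads `data_path`; the `quickdraw_data` directory is hardcoded instead. Below is a hedged sketch of a stricter loader that fills in defaults and rejects misspelled keys or wrongly typed values. `DEFAULTS` simply mirrors the committed values, and `load_config_with_defaults` is a hypothetical name that the script does not use.

```python
import json

# Defaults mirroring the committed config.json (an assumption for this sketch)
DEFAULTS = {
    "batch_size": 64,
    "num_epochs": 5,
    "learning_rate": 0.001,
    "model_save_path": "cat_dog_classifier.bin",
    "data_path": "quickdraw_data",
    "num_samples": 5000,
}


def load_config_with_defaults(text):
    """Merge a JSON config string over DEFAULTS, checking keys and value types."""
    user = json.loads(text)
    unknown = set(user) - set(DEFAULTS)
    if unknown:
        # A typo such as "batchsize" would otherwise be silently ignored
        raise KeyError(f"unknown config keys: {sorted(unknown)}")
    config = {**DEFAULTS, **user}
    for key, default in DEFAULTS.items():
        # Accept ints where a float is expected (e.g. "learning_rate": 1)
        expected = (int, float) if isinstance(default, float) else type(default)
        value = config[key]
        # bool is a subclass of int in Python, so reject it explicitly
        if not isinstance(value, expected) or isinstance(value, bool):
            raise TypeError(f"{key} should be {type(default).__name__}, got {value!r}")
    return config
```

Usage would look like `load_config_with_defaults(open("config.json").read())`. Taking the JSON text rather than a path keeps the function easy to test.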

model.py

ADDED

@@ -0,0 +1,13 @@

```python
import torch.nn as nn
import torch


class SimpleModel(nn.Module):
    def __init__(self):
        super(SimpleModel, self).__init__()
        self.fc1 = nn.Linear(784, 128)
        self.fc2 = nn.Linear(128, 2)

    def forward(self, x):
        # Flatten (N, 1, 28, 28) image batches to (N, 784); (N, 784) input is unchanged
        x = torch.flatten(x, 1)
        x = torch.relu(self.fc1(x))
        x = self.fc2(x)
        return x
```
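`SimpleModel` is a two-layer MLP over flattened 28×28 bitmaps. It has 784 · 128 + 128 + 128 · 2 + 2 = 100,738 parameters. The training script does not train this class. It trains and saves `SimpleCNN`, whose layers total 420,610 parameters (about 1.68 MB as float32, consistent with the 1,685,764-byte checkpoint). The two state dicts therefore have different keys and shapes, and `SimpleModel` cannot load `cat_dog_classifier.bin`. For reference, here is a sketch of the same MLP written with `nn.Sequential` and `nn.Flatten`, so it accepts the `(N, 1, 28, 28)` batches that `load_and_preprocess_data()` produces. Its state-dict keys (`1.weight`, ...) differ from `SimpleModel`'s (`fc1.weight`, ...).

```python
import torch
import torch.nn as nn

# Same layer sizes as SimpleModel in model.py
mlp = nn.Sequential(
    nn.Flatten(),         # (N, 1, 28, 28) -> (N, 784)
    nn.Linear(784, 128),
    nn.ReLU(),
    nn.Linear(128, 2),    # logits for cat (0) and dog (1)
)

# Total trainable parameters: weights plus biases of both Linear layers
num_params = sum(p.numel() for p in mlp.parameters())

# A dummy batch shaped like the training data
batch = torch.zeros(4, 1, 28, 28)
logits = mlp(batch)
```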

quickdraw_data/cat.npy

ADDED

@@ -0,0 +1,3 @@

```text
version https://git-lfs.github.com/spec/v1
oid sha256:21a281839d3f2eef601d57d2338a4eafdf24649f8d0a0e42d3ec3e595911463e
size 96590448
```

quickdraw_data/dog.npy

ADDED

@@ -0,0 +1,3 @@

```text
version https://git-lfs.github.com/spec/v1
oid sha256:72f95508d440976a075e7098557647bbdeaea7a06c63889215c5b87fbf82ea2c
size 119292736
```
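Like the checkpoint, these two files are committed as Git LFS pointers to the Quick, Draw! `numpy_bitmap` files. In each file, every row is one drawing stored as a flattened 28×28 grayscale bitmap (784 `uint8` values), which is why the script reshapes to `(-1, 1, 28, 28)`. `load_and_preprocess_data()` reads each file (roughly 97 MB and 119 MB) fully into memory before keeping only the first `num_samples` rows. The sketch below uses `np.load(..., mmap_mode="r")` instead, so only the sliced rows are copied into memory. `load_first_rows` is a hypothetical helper, not a function in the repository.

```python
import numpy as np


def load_first_rows(path, num_samples):
    """Read only the first `num_samples` drawings from a .npy bitmap file.

    mmap_mode="r" memory-maps the file instead of reading it all at once;
    np.array(...) then copies just the requested rows into a regular array.
    """
    bitmaps = np.load(path, mmap_mode="r")
    return np.array(bitmaps[:num_samples])
```

In the script, this would replace `np.load('quickdraw_data/cat.npy')[:num_samples]` with `load_first_rows('quickdraw_data/cat.npy', num_samples)`, and likewise for `dog.npy`.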

requirements.txt

ADDED

@@ -0,0 +1,6 @@

```text
torch==2.0.0
numpy==1.24.3
requests==2.31.0
Pillow==9.4.0
matplotlib==3.8.0
scikit-learn==1.3.0
```

sample_predictions.png

ADDED


train_cat_dog_classifier.py

ADDED

@@ -0,0 +1,310 @@

```python
import os
import json

import numpy as np
import torch
from torch.utils.data import TensorDataset, DataLoader, random_split
import torch.nn as nn
import torch.nn.functional as F
import torch.optim as optim
from PIL import Image
import requests

# Ensure that matplotlib does not try to open a window (useful if running on a server).
# The non-interactive backend is selected before pyplot is imported.
import matplotlib
matplotlib.use('Agg')
import matplotlib.pyplot as plt

# Check if CUDA is available
device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')
print(f'Using device: {device}')


def load_config(config_file='config.json'):
    """
    Loads configuration parameters from a JSON file.

    Args:
        config_file (str): Path to the JSON config file.

    Returns:
        config (dict): Dictionary containing configuration parameters.
    """
    with open(config_file, 'r') as f:
        return json.load(f)


def download_quickdraw_data():
    """
    Downloads 'cat.npy' and 'dog.npy' files from the Quick, Draw! dataset.
    """
    os.makedirs('quickdraw_data', exist_ok=True)
    base_url = 'https://storage.googleapis.com/quickdraw_dataset/full/numpy_bitmap/'

    categories = ['cat', 'dog']
    for category in categories:
        url = f"{base_url}{category}.npy"
        save_path = os.path.join('quickdraw_data', f"{category}.npy")

        if os.path.exists(save_path):
            print(f"{category}.npy already exists, skipping download.")
            continue

        print(f"Downloading {category}.npy...")
        # Stream the large .npy file to disk in chunks; the timeout avoids hanging forever
        response = requests.get(url, stream=True, timeout=60)
        if response.status_code == 200:
            with open(save_path, 'wb') as f:
                for chunk in response.iter_content(chunk_size=8192):
                    f.write(chunk)
            print(f"Downloaded {category}.npy")
        else:
            print(f"Failed to download {category}.npy. Status code: {response.status_code}")


def load_and_preprocess_data(num_samples=5000):
    """
    Loads and preprocesses the data for 'cat' and 'dog' categories.

    Args:
        num_samples (int): Number of samples to load for each category.

    Returns:
        train_loader, test_loader: DataLoaders for training and testing.
    """
    # Load data
    cat_data = np.load('quickdraw_data/cat.npy')
    dog_data = np.load('quickdraw_data/dog.npy')

    # Limit the number of samples
    cat_data = cat_data[:num_samples]
    dog_data = dog_data[:num_samples]

    # Create labels: 0 for cat, 1 for dog
    cat_labels = np.zeros(len(cat_data), dtype=np.int64)
    dog_labels = np.ones(len(dog_data), dtype=np.int64)

    # Combine data and labels
    data = np.concatenate((cat_data, dog_data), axis=0)
    labels = np.concatenate((cat_labels, dog_labels), axis=0)

    # Normalize pixel values from [0, 255] to [0, 1]
    data = data.astype('float32') / 255.0

    # Reshape data for PyTorch: (batch_size, channels, height, width)
    data = data.reshape(-1, 1, 28, 28)

    # Convert to PyTorch tensors
    data_tensor = torch.tensor(data)
    labels_tensor = torch.tensor(labels)

    # Create a TensorDataset
    dataset = TensorDataset(data_tensor, labels_tensor)

    # Split dataset into training and testing sets (80% train, 20% test)
    train_size = int(0.8 * len(dataset))
    test_size = len(dataset) - train_size

    train_dataset, test_dataset = random_split(dataset, [train_size, test_size])

    # Create DataLoaders
    config = load_config()
    batch_size = config['batch_size']

    train_loader = DataLoader(train_dataset, batch_size=batch_size, shuffle=True)
    test_loader = DataLoader(test_dataset, batch_size=batch_size)

    return train_loader, test_loader


class SimpleCNN(nn.Module):
    """
    Defines a simple Convolutional Neural Network for binary classification.
    """
    def __init__(self):
        super(SimpleCNN, self).__init__()
        # Convolutional layers
        self.conv1 = nn.Conv2d(1, 32, kernel_size=3, padding=1)
        self.pool = nn.MaxPool2d(2, 2)
        self.conv2 = nn.Conv2d(32, 64, kernel_size=3, padding=1)
        # Fully connected layers
        self.fc1 = nn.Linear(64 * 7 * 7, 128)
        self.fc2 = nn.Linear(128, 2)  # 2 output classes: cat and dog

    def forward(self, x):
        x = F.relu(self.conv1(x))  # Convolutional layer 1: (N, 32, 28, 28)
        x = self.pool(x)           # Max pooling: (N, 32, 14, 14)
        x = F.relu(self.conv2(x))  # Convolutional layer 2: (N, 64, 14, 14)
        x = self.pool(x)           # Max pooling: (N, 64, 7, 7)
        x = torch.flatten(x, 1)    # Flatten to (N, 64 * 7 * 7)
        x = F.relu(self.fc1(x))    # Fully connected layer 1
        x = self.fc2(x)            # Output layer (raw logits)
        return x


def train_model(model, train_loader, num_epochs=5, learning_rate=0.001):
    """
    Trains the model using the training DataLoader.

    Args:
        model: The neural network model to train.
        train_loader: DataLoader for the training data.
        num_epochs (int): Number of epochs to train.
        learning_rate (float): Learning rate for the optimizer.
    """
    criterion = nn.CrossEntropyLoss()
    optimizer = optim.Adam(model.parameters(), lr=learning_rate)

    model.train()
    for epoch in range(num_epochs):
        running_loss = 0.0

        for images, labels in train_loader:
            images = images.to(device)
            labels = labels.to(device)

            # Zero the parameter gradients
            optimizer.zero_grad()

            # Forward pass
            outputs = model(images)
            loss = criterion(outputs, labels)

            # Backward pass and optimize
            loss.backward()
            optimizer.step()

            # Weight the batch-mean loss by batch size to get a per-sample epoch average
            running_loss += loss.item() * images.size(0)

        epoch_loss = running_loss / len(train_loader.dataset)
        print(f'Epoch [{epoch+1}/{num_epochs}], Loss: {epoch_loss:.4f}')


def evaluate_model(model, test_loader):
    """
    Evaluates the model on the test DataLoader.

    Args:
        model: The trained neural network model.
        test_loader: DataLoader for the test data.
    """
    model.eval()
    correct = 0
    total = 0

    with torch.no_grad():
        for images, labels in test_loader:
            images = images.to(device)
            labels = labels.to(device)

            outputs = model(images)
            predicted = outputs.argmax(dim=1)  # Index of the highest logit

            total += labels.size(0)
            correct += (predicted == labels).sum().item()

    accuracy = 100 * correct / total
    print(f'Test Accuracy: {accuracy:.2f}%')


def save_model(model, filepath='cat_dog_classifier.bin'):
    """
    Saves the trained model's state_dict to a file.

    Args:
        model: The trained neural network model.
        filepath (str): The path where the model will be saved.
    """
    torch.save(model.state_dict(), filepath)
    print(f'Model saved to {filepath}')


def load_model(model, filepath='cat_dog_classifier.bin'):
    """
    Loads the model parameters from a file.

    Args:
        model: The neural network model to load parameters into.
        filepath (str): The path to the saved model file.
    """
    # weights_only=True restricts unpickling to tensors and plain containers
    model.load_state_dict(torch.load(filepath, map_location=device, weights_only=True))
    model.to(device)
    print(f'Model loaded from {filepath}')


def predict_image(model, image):
    """
    Predicts the class of a single image.

    Args:
        model: The trained neural network model.
        image: A PIL Image or NumPy array.

    Returns:
        prediction (str): The predicted class label ('cat' or 'dog').
    """
    # Preprocess the image into a normalized 28x28 float32 array
    if isinstance(image, Image.Image):
        image = image.convert('L').resize((28, 28))
        image = np.array(image).astype('float32') / 255.0
    elif isinstance(image, np.ndarray):
        # Assumes uint8 pixel values in [0, 255], as in the Quick, Draw! bitmaps
        if image.size == 28 * 28:
            image = image.reshape(28, 28)  # Also accepts flattened 784-value rows
        else:
            image = np.array(Image.fromarray(image).convert('L').resize((28, 28)))
        image = image.astype('float32') / 255.0
    else:
        raise ValueError("Image must be a PIL Image or NumPy array.")

    image = image.reshape(1, 1, 28, 28)
    image_tensor = torch.tensor(image).to(device)

    # Get prediction
    model.eval()
    with torch.no_grad():
        output = model(image_tensor)
        predicted = output.argmax(dim=1)
    return 'cat' if predicted.item() == 0 else 'dog'


def visualize_predictions(model, test_loader, num_images=8):
    """
    Visualizes sample predictions from the test set.

    Args:
        model: The trained neural network model.
        test_loader: DataLoader for the test data.
        num_images (int): Number of images to display.
    """
    model.eval()
    images, labels = next(iter(test_loader))  # Take the first test batch

    images = images.to(device)
    labels = labels.to(device)

    # Get predictions (no gradients needed for inference)
    with torch.no_grad():
        outputs = model(images)
    predicted = outputs.argmax(dim=1)

    # Move images to CPU for plotting
    images = images.cpu().numpy()
    predicted = predicted.cpu().numpy()
    labels = labels.cpu().numpy()

    # Never plot more images than the batch contains
    num_images = min(num_images, len(images))

    # Plot the images with predicted and true labels on a 2-row grid
    fig = plt.figure(figsize=(10, 4))
    cols = (num_images + 1) // 2  # Round up so odd counts still fit
    for idx in range(num_images):
        ax = fig.add_subplot(2, cols, idx + 1)
        img = images[idx][0]
        ax.imshow(img, cmap='gray')
        pred_label = 'cat' if predicted[idx] == 0 else 'dog'
        true_label = 'cat' if labels[idx] == 0 else 'dog'
        ax.set_title(f'Pred: {pred_label}\nTrue: {true_label}')
        ax.axis('off')
    plt.tight_layout()
    plt.savefig('sample_predictions.png')
    plt.close(fig)
    print('Sample predictions saved to sample_predictions.png')


def main():
    # Load configuration
    config = load_config()

    # Step 1: Download the data
    download_quickdraw_data()

    # Step 2: Load and preprocess the data
    train_loader, test_loader = load_and_preprocess_data(num_samples=config['num_samples'])

    # Step 3: Initialize the model
    model = SimpleCNN().to(device)

    # Step 4: Train the model
    train_model(model, train_loader, num_epochs=config['num_epochs'], learning_rate=config['learning_rate'])

    # Step 5: Evaluate the model
    evaluate_model(model, test_loader)

    # Step 6: Visualize sample predictions
    visualize_predictions(model, test_loader, num_images=8)

    # Step 7: Save the model
    save_model(model, config['model_save_path'])


if __name__ == '__main__':
    main()
```
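The input size of `fc1` (`64 * 7 * 7`) follows from the layer hyperparameters. A `Conv2d` or `MaxPool2d` layer with kernel k, stride s and padding p maps a side length H to floor((H + 2p - k) / s) + 1. With k=3, p=1, s=1, each convolution keeps the side length, and each 2×2, stride-2 max pool halves it, so the side goes 28 -> 14 -> 7. The small sketch below computes this and cross-checks it against the same PyTorch layers on a dummy batch. The helper `out_size` is a hypothetical name, and the formula assumes dilation 1 and the default floor rounding.

```python
import torch
import torch.nn as nn


def out_size(h, kernel, stride=1, padding=0):
    """Output side length of a Conv2d/MaxPool2d layer (dilation 1, floor mode)."""
    return (h + 2 * padding - kernel) // stride + 1


# Walk the side length through SimpleCNN's conv/pool stack
h = 28
h = out_size(h, kernel=3, padding=1)  # conv1: 28 -> 28
h = out_size(h, kernel=2, stride=2)   # pool:  28 -> 14
h = out_size(h, kernel=3, padding=1)  # conv2: 14 -> 14
h = out_size(h, kernel=2, stride=2)   # pool:  14 -> 7
flat_features = 64 * h * h            # 64 channels x 7 x 7

# Cross-check by running the same layers on one zero image
features = nn.Sequential(
    nn.Conv2d(1, 32, kernel_size=3, padding=1), nn.ReLU(), nn.MaxPool2d(2, 2),
    nn.Conv2d(32, 64, kernel_size=3, padding=1), nn.ReLU(), nn.MaxPool2d(2, 2),
)
with torch.no_grad():
    measured = features(torch.zeros(1, 1, 28, 28)).flatten(1).shape[1]
```

If the input resolution or pooling changed, `h` would change too, and `fc1`'s `in_features` would have to be updated to match.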