Update readme

#2

by

takuma104

- opened

- README.md +117 -1

- images/mask.png +0 -0

- images/original.png +0 -0

- images/output.png +0 -0

- sd.png +0 -0

README.md

CHANGED

|

@@ -10,4 +10,120 @@ duplicated_from: ControlNet-1-1-preview/control_v11p_sd15_inpaint

|

|

| 10 |

|

| 11 |

# Controlnet - v1.1 - *InPaint Version*

|

| 12 |

|

| 13 |

-

| 10 |

|

| 11 |

# Controlnet - v1.1 - *InPaint Version*

|

| 12 |

|

| 13 |

+

**ControlNet v1.1** is the successor to [ControlNet v1.0](https://huggingface.co/lllyasviel/ControlNet)

|

| 14 |

+

and was released in [lllyasviel/ControlNet-v1-1](https://huggingface.co/lllyasviel/ControlNet-v1-1) by [Lvmin Zhang](https://huggingface.co/lllyasviel).

|

| 15 |

+

|

| 16 |

+

This checkpoint is a conversion of [the original checkpoint](https://huggingface.co/lllyasviel/ControlNet-v1-1/blob/main/control_v11p_sd15_inpaint.pth) into `diffusers` format.

|

| 17 |

+

It can be used in combination with **Stable Diffusion**, such as [runwayml/stable-diffusion-v1-5](https://huggingface.co/runwayml/stable-diffusion-v1-5).

|

| 18 |

+

|

| 19 |

+

|

| 20 |

+

For more details, please also have a look at the [🧨 Diffusers docs](https://huggingface.co/docs/diffusers/api/pipelines/stable_diffusion/controlnet).

|

| 21 |

+

|

| 22 |

+

|

| 23 |

+

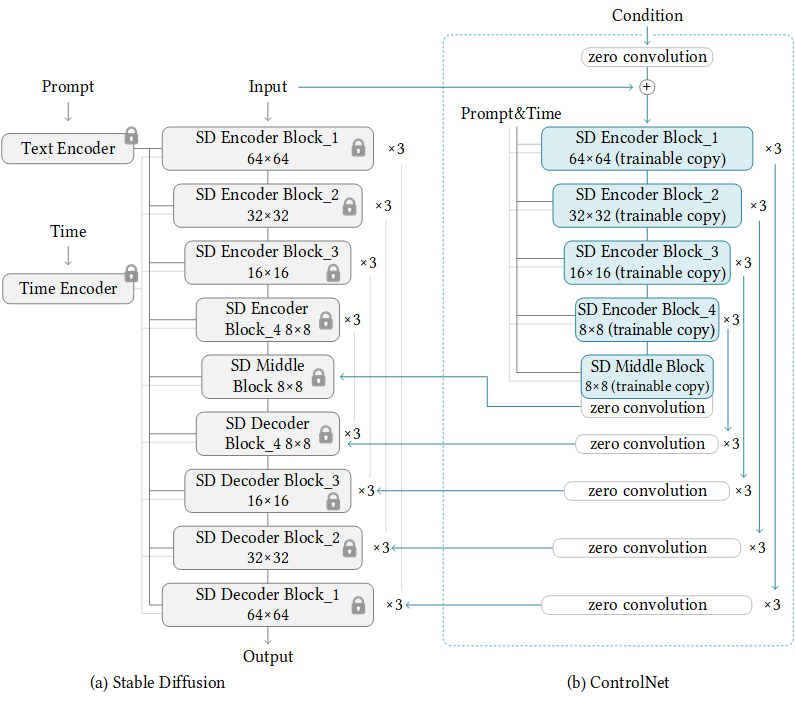

ControlNet is a neural network structure to control diffusion models by adding extra conditions.

|

| 24 |

+

|

| 25 |

+

|

| 26 |

+

|

| 27 |

+

This checkpoint corresponds to the ControlNet conditioned on **inpaint images**.

|

| 28 |

+

|

| 29 |

+

## Model Details

|

| 30 |

+

- **Developed by:** Lvmin Zhang, Maneesh Agrawala

|

| 31 |

+

- **Model type:** Diffusion-based text-to-image generation model

|

| 32 |

+

- **Language(s):** English

|

| 33 |

+

- **License:** [The CreativeML OpenRAIL M license](https://huggingface.co/spaces/CompVis/stable-diffusion-license) is an [Open RAIL M license](https://www.licenses.ai/blog/2022/8/18/naming-convention-of-responsible-ai-licenses), adapted from the work that [BigScience](https://bigscience.huggingface.co/) and [the RAIL Initiative](https://www.licenses.ai/) are jointly carrying out in the area of responsible AI licensing. See also [the article about the BLOOM Open RAIL license](https://bigscience.huggingface.co/blog/the-bigscience-rail-license) on which our license is based.

|

| 34 |

+

- **Resources for more information:** [GitHub Repository](https://github.com/lllyasviel/ControlNet), [Paper](https://arxiv.org/abs/2302.05543).

|

| 35 |

+

- **Cite as:**

|

| 36 |

+

|

| 37 |

+

@misc{zhang2023adding,

|

| 38 |

+

title={Adding Conditional Control to Text-to-Image Diffusion Models},

|

| 39 |

+

author={Lvmin Zhang and Maneesh Agrawala},

|

| 40 |

+

year={2023},

|

| 41 |

+

eprint={2302.05543},

|

| 42 |

+

archivePrefix={arXiv},

|

| 43 |

+

primaryClass={cs.CV}

|

| 44 |

+

}

|

| 45 |

+

## Introduction

|

| 46 |

+

|

| 47 |

+

ControlNet was proposed in [*Adding Conditional Control to Text-to-Image Diffusion Models*](https://arxiv.org/abs/2302.05543) by

|

| 48 |

+

Lvmin Zhang and Maneesh Agrawala.

|

| 49 |

+

|

| 50 |

+

The abstract reads as follows:

|

| 51 |

+

|

| 52 |

+

*We present a neural network structure, ControlNet, to control pretrained large diffusion models to support additional input conditions.

|

| 53 |

+

The ControlNet learns task-specific conditions in an end-to-end way, and the learning is robust even when the training dataset is small (< 50k).

|

| 54 |

+

Moreover, training a ControlNet is as fast as fine-tuning a diffusion model, and the model can be trained on a personal devices.

|

| 55 |

+

Alternatively, if powerful computation clusters are available, the model can scale to large amounts (millions to billions) of data.

|

| 56 |

+

We report that large diffusion models like Stable Diffusion can be augmented with ControlNets to enable conditional inputs like edge maps, segmentation maps, keypoints, etc.

|

| 57 |

+

This may enrich the methods to control large diffusion models and further facilitate related applications.*

|

| 58 |

+

|

| 59 |

+

## Example

|

| 60 |

+

|

| 61 |

+

It is recommended to use the checkpoint with [Stable Diffusion v1-5](https://huggingface.co/runwayml/stable-diffusion-v1-5) as the checkpoint

|

| 62 |

+

has been trained on it.

|

| 63 |

+

Experimentally, the checkpoint can also be used with other diffusion models, such as Stable Diffusion models fine-tuned with DreamBooth.

|

| 64 |

+

|

| 65 |

+

**Note**: If you want to process an image to create the auxiliary conditioning, external dependencies are required as shown below:

|

| 66 |

+

1. Install https://github.com/patrickvonplaten/controlnet_aux

|

| 67 |

+

```sh

|

| 68 |

+

$ pip install controlnet_aux==0.3.0

|

| 69 |

+

```

|

| 70 |

+

2. Install `diffusers` and related packages:

|

| 71 |

+

```sh

|

| 72 |

+

$ pip install diffusers transformers accelerate

|

| 73 |

+

```

|

| 74 |

+

3. Run code:

|

| 75 |

+

```python

|

| 76 |

+

import torch

|

| 77 |

+

import os

|

| 78 |

+

from diffusers.utils import load_image

|

| 79 |

+

from PIL import Image

|

| 80 |

+

import numpy as np

|

| 81 |

+

from diffusers import (

|

| 82 |

+

ControlNetModel,

|

| 83 |

+

StableDiffusionControlNetPipeline,

|

| 84 |

+

UniPCMultistepScheduler,

|

| 85 |

+

)

|

| 86 |

+

checkpoint = "lllyasviel/control_v11p_sd15_inpaint"

|

| 87 |

+

original_image = load_image(

|

| 88 |

+

"https://huggingface.co/lllyasviel/control_v11p_sd15_inpaint/resolve/main/images/original.png"

|

| 89 |

+

)

|

| 90 |

+

mask_image = load_image(

|

| 91 |

+

"https://huggingface.co/lllyasviel/control_v11p_sd15_inpaint/resolve/main/images/mask.png"

|

| 92 |

+

)

|

| 93 |

+

|

| 94 |

+

def make_inpaint_condition(image, image_mask):

|

| 95 |

+

    image = np.array(image.convert("RGB")).astype(np.float32) / 255.0

|

| 96 |

+

    image_mask = np.array(image_mask.convert("L"))

|

| 97 |

+

    assert image.shape[0:2] == image_mask.shape[0:2], "image and image_mask must have the same image size"

|

| 98 |

+

    image[image_mask < 128] = -1.0  # set as masked pixel

|

| 99 |

+

    image = np.expand_dims(image, 0).transpose(0, 3, 1, 2)

|

| 100 |

+

    image = torch.from_numpy(image)

|

| 101 |

+

    return image

|

| 102 |

+

|

| 103 |

+

control_image = make_inpaint_condition(original_image, mask_image)

|

| 104 |

+

prompt = "best quality"

|

| 105 |

+

negative_prompt = "lowres, bad anatomy, bad hands, cropped, worst quality"

|

| 106 |

+

controlnet = ControlNetModel.from_pretrained(checkpoint, torch_dtype=torch.float16)

|

| 107 |

+

pipe = StableDiffusionControlNetPipeline.from_pretrained(

|

| 108 |

+

"runwayml/stable-diffusion-v1-5", controlnet=controlnet, torch_dtype=torch.float16

|

| 109 |

+

)

|

| 110 |

+

pipe.scheduler = UniPCMultistepScheduler.from_config(pipe.scheduler.config)

|

| 111 |

+

pipe.enable_model_cpu_offload()

|

| 112 |

+

generator = torch.manual_seed(2)

|

| 113 |

+

image = pipe(prompt, negative_prompt=negative_prompt, num_inference_steps=30,

|

| 114 |

+

generator=generator, image=control_image).images[0]

|

| 115 |

+

image.save('images/output.png')

|

| 116 |

+

```
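To see what `make_inpaint_condition` hands to the pipeline, the sketch below repeats its array steps on a tiny synthetic image using NumPy only. The helper name `inpaint_condition_array` and its `threshold` parameter are hypothetical and not part of the README. It assumes the same convention as the snippet above: mask values below 128 mark the pixels to be inpainted.

```python
import numpy as np


def inpaint_condition_array(rgb, mask, threshold=128):
    """NumPy-only variant of make_inpaint_condition (hypothetical helper).

    rgb:  uint8 array of shape (H, W, 3)
    mask: uint8 array of shape (H, W); values below `threshold` mark masked
          pixels, mirroring the `image_mask < 128` test in the snippet above.
    """
    if rgb.shape[:2] != mask.shape[:2]:
        raise ValueError("image and mask must have the same height and width")
    cond = rgb.astype(np.float32) / 255.0    # scale pixel values to [0, 1]
    cond[mask < threshold] = -1.0            # flag masked pixels in all channels
    return cond[None].transpose(0, 3, 1, 2)  # (H, W, 3) -> (1, 3, H, W)


# Toy 2x3 image: every pixel is pure white; the left column of the mask is dark.
rgb = np.full((2, 3, 3), 255, dtype=np.uint8)
mask = np.array([[0, 255, 255],
                 [0, 255, 255]], dtype=np.uint8)

cond = inpaint_condition_array(rgb, mask)
print(cond.shape)  # (1, 3, 2, 3)
print(cond[0, 0])  # first channel: -1 in the left column, 1 elsewhere
```

Unmasked pixels stay in the range [0, 1], so the value -1 is an unambiguous marker for the region to be filled. The README version then wraps the array with `torch.from_numpy` before passing it to the pipeline as `image`.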

|

| 117 |

+

|

| 118 |

+

|

| 119 |

+

|

| 120 |

+

## Other released checkpoints v1-1

|

| 121 |

+

The authors released 14 different checkpoints, each trained with [Stable Diffusion v1-5](https://huggingface.co/runwayml/stable-diffusion-v1-5)

|

| 122 |

+

on a different type of conditioning:

|

| 123 |

+

| Model Name | Control Image Overview| Control Image Example | Generated Image Example |

|

| 124 |

+

|---|---|---|---|

|

| 125 |

+

TODO

|

| 126 |

+

### Training

|

| 127 |

+

TODO

|

| 128 |

+

### Blog post

|

| 129 |

+

For more information, please also have a look at the [Diffusers ControlNet Blog Post](https://huggingface.co/blog/controlnet).

|

images/mask.png

ADDED

|

images/original.png

ADDED

|

images/output.png

ADDED

|

sd.png

ADDED

|