You need to agree to share your contact information to access this model

This repository is publicly accessible, but you have to accept the conditions to access its files and content.

The collected information will help us better understand the pyannote.audio user base and help its maintainers improve it further. Though this pipeline is released under the MIT license and will always remain open-source, we will occasionally email you about premium pipelines and paid services around pyannote.

Using this open-source pipeline in production?

Consider switching to pyannoteAI for better and faster options.

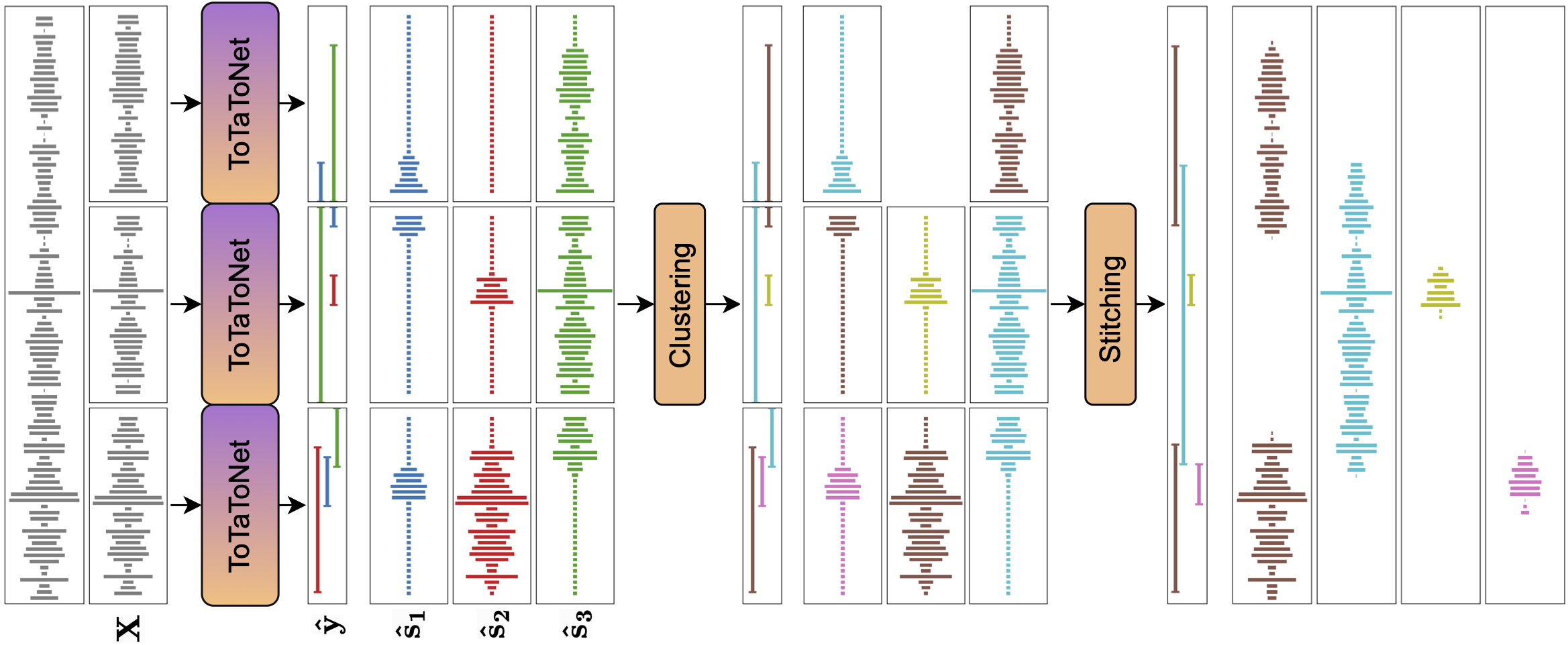

🎹 PixIT / joint speaker diarization and speech separation

This pipeline ingests mono audio sampled at 16kHz and outputs speaker diarization as an Annotation instance and speech separation as a SlidingWindowFeature.

Audio files sampled at a different rate are resampled to 16kHz automatically upon loading.

It was trained by Joonas Kalda with pyannote.audio 3.3.0 using the AMI dataset (single distant microphone, SDM). The paper and companion repository describe the approach in more detail.

Requirements

- Install pyannote.audio 3.3.0 with pip install pyannote.audio[separation]==3.3.0
- Accept pyannote/separation-ami-1.0 user conditions
- Accept pyannote/speech-separation-ami-1.0 user conditions
- Create access token at hf.co/settings/tokens.
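
Once the access token exists, it is usually safer to read it from the environment than to paste it into a script. The following is a minimal sketch, not part of the official instructions: the helper name `read_hf_token` is hypothetical, and the use of an `HF_TOKEN` environment variable is an assumption about how you choose to store the token.

```python
import os


def read_hf_token(env_var: str = "HF_TOKEN") -> str:
    """Return the access token stored in an environment variable.

    `read_hf_token` is a hypothetical helper and `HF_TOKEN` is an assumed
    variable name; adapt both to your own setup.
    """
    token = os.environ.get(env_var, "").strip()
    if not token:
        raise RuntimeError(
            f"Set the {env_var} environment variable to your access token"
        )
    return token


# Possible usage with the pipeline (not executed here):
# pipeline = Pipeline.from_pretrained(
#     "pyannote/speech-separation-ami-1.0",
#     use_auth_token=read_hf_token())
```

Failing early with a clear message avoids a less obvious authorization error later, when the pipeline files are downloaded.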

Usage

# instantiate the pipeline
from pyannote.audio import Pipeline
pipeline = Pipeline.from_pretrained(
    "pyannote/speech-separation-ami-1.0",
    use_auth_token="HUGGINGFACE_ACCESS_TOKEN_GOES_HERE")

# run the pipeline on an audio file
diarization, sources = pipeline("audio.wav")

# dump the diarization output to disk using RTTM format
with open("audio.rttm", "w") as rttm:
    diarization.write_rttm(rttm)

# dump sources to disk as SPEAKER_XX.wav files
import scipy.io.wavfile
for s, speaker in enumerate(diarization.labels()):
    scipy.io.wavfile.write(f'{speaker}.wav', 16000, sources.data[:,s])
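
The RTTM file written above is plain text with one speech turn per line, so it can be inspected without pyannote. The sketch below sums speaking time per speaker. It assumes the standard RTTM field order (type, file id, channel, onset, duration, two placeholders, speaker name, two placeholders); the function name `speaking_time_from_rttm` and the sample lines are made up for illustration.

```python
from collections import defaultdict


def speaking_time_from_rttm(lines):
    """Sum speech duration (in seconds) per speaker from RTTM lines."""
    totals = defaultdict(float)
    for line in lines:
        fields = line.split()
        # Skip blank or non-SPEAKER lines
        if len(fields) < 8 or fields[0] != "SPEAKER":
            continue
        duration = float(fields[4])  # 5th field: turn duration
        speaker = fields[7]          # 8th field: speaker label
        totals[speaker] += duration
    return dict(totals)


# Illustrative lines, not real pipeline output
example = [
    "SPEAKER audio 1 0.000 2.500 <NA> <NA> SPEAKER_00 <NA> <NA>",
    "SPEAKER audio 1 2.500 1.250 <NA> <NA> SPEAKER_01 <NA> <NA>",
    "SPEAKER audio 1 4.000 0.750 <NA> <NA> SPEAKER_00 <NA> <NA>",
]
print(speaking_time_from_rttm(example))
```

To run it on the real output, pass the open file instead: `speaking_time_from_rttm(open("audio.rttm"))`. Overlapping turns are counted once for each speaker involved.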

Processing on GPU

pyannote.audio pipelines run on CPU by default.

You can send them to GPU with the following lines:

import torch

pipeline.to(torch.device("cuda"))
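
On machines that may or may not have a GPU, the device can be chosen at runtime. This is a minimal sketch with a hypothetical helper, `pick_device_name`, that falls back to CPU when CUDA (or torch itself) is not available:

```python
def pick_device_name() -> str:
    """Return "cuda" when a CUDA device is usable, otherwise "cpu".

    Hypothetical helper, not part of pyannote.audio.
    """
    try:
        import torch
    except ImportError:
        # torch is not installed, so only the CPU name makes sense
        return "cpu"
    return "cuda" if torch.cuda.is_available() else "cpu"


print(pick_device_name())

# Possible usage with the pipeline (not executed here):
# import torch
# pipeline.to(torch.device(pick_device_name()))
```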

Processing from memory

Pre-loading audio files in memory may result in faster processing:

import torchaudio
waveform, sample_rate = torchaudio.load("audio.wav")
diarization, sources = pipeline({"waveform": waveform, "sample_rate": sample_rate})

Monitoring progress

Hooks are available to monitor the progress of the pipeline:

from pyannote.audio.pipelines.utils.hook import ProgressHook
with ProgressHook() as hook:
    diarization, sources = pipeline("audio.wav", hook=hook)
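
A hook does not have to draw a progress bar. The sketch below is a hypothetical `StepLogger` that simply records each reported step. It assumes a hook is any callable invoked as `hook(step_name, step_artifact, file=..., total=..., completed=...)`, which mirrors how `ProgressHook` appears to be called; treat that signature as an assumption and check it against your installed version.

```python
from typing import Any, List, Mapping, Optional, Tuple


class StepLogger:
    """Hypothetical hook that records which pipeline steps were reported."""

    def __init__(self) -> None:
        self.events: List[Tuple[str, Optional[int], Optional[int]]] = []

    def __call__(
        self,
        step_name: str,
        step_artifact: Any,
        file: Optional[Mapping] = None,
        total: Optional[int] = None,
        completed: Optional[int] = None,
    ) -> None:
        # Keep a record of the step and its progress counters
        self.events.append((step_name, completed, total))
        if total:
            print(f"{step_name}: {completed}/{total}")
        else:
            print(f"{step_name}: done")


# Possible usage with the pipeline (not executed here):
# logger = StepLogger()
# diarization, sources = pipeline("audio.wav", hook=logger)
```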

Citations

@inproceedings{Kalda24,
  author={Joonas Kalda and Clément Pagés and Ricard Marxer and Tanel Alumäe and Hervé Bredin},
  title={{PixIT: Joint Training of Speaker Diarization and Speech Separation from Real-world Multi-speaker Recordings}},
  year=2024,
  booktitle={Proc. Odyssey 2024},
}

@inproceedings{Bredin23,
  author={Hervé Bredin},
  title={{pyannote.audio 2.1 speaker diarization pipeline: principle, benchmark, and recipe}},
  year=2023,
  booktitle={Proc. INTERSPEECH 2023},
}
